Running OpenClaw on Flat Rate: Two-Tier LLM Orchestration

Running your own AI agent gives you freedom. You pick the models, the tools, the memory, the rules. You decide which MCP servers it can call, which repos it can read, which contacts it can reply to. No vendor controls the prompt, the logs, or the schedule.

OpenClaw is the open-source runtime that gives you that freedom. It runs on any VM, speaks MCP, handles multi-channel bindings, and keeps session state isolated per agent. I run mine on a Hetzner box with a Signal bridge, a WebChat, and a RAG over my own documentation.

Operating a setup like this can cost a lot. Not the VM. Not the storage. Not the bandwidth. The LLM.

Subscription plans from the main vendors are scoped to each vendor's own client. ChatGPT Plus runs inside ChatGPT. Claude Max runs inside Claude apps and Claude Code. Gemini Advanced runs inside Google's own surfaces. Wiring a custom self-hosted agent directly against these subscriptions is either unsupported or restricted by terms of service.

Pay-per-token stays open to any client, but the cost moves to the usage side. Deep reasoning tasks are expensive at frontier rates, and the bill scales linearly with every call.

So I went looking for a flat rate. I found one for the cheap tier. I could not find one for the deep tier. This article is about how I stopped choosing between them, and how OpenClaw ended up running on a predictable monthly bill instead of an unpredictable one.

Why Is Running OpenClaw (or Any Self-Hosted AI Agent) So Expensive?

The cost of running a self-hosted AI agent comes from the LLM, not from the infrastructure around it. Three sourcing options exist, and each has a specific failure mode.

Option 1: run an open model on your own hardware. GPU instances that could approach Claude Opus 4.6 or GPT-5 quality run from roughly $2 to $12 per hour on AWS, GCP, or Lambda Labs, depending on the GPU. An always-on agent at $3 per hour is $2,160 per month before storage, bandwidth, or redundancy. Dedicated GPU hardware is possible, but the upfront cost and the operational work (drivers, quantization, inference servers, cooling) push it outside what a solo builder can justify for a personal agent. Self-hosting the LLM locally at Claude-Code-comparable quality is not economically feasible for this use case.

Option 2: use a frontier subscription. Plans like ChatGPT Plus and Pro, Claude Max, and Gemini Advanced are scoped to each vendor's own client. OpenAI does not expose a general API under the ChatGPT subscription. Anthropic does not expose a general API under Claude Max. Google does not expose one under Gemini Advanced either. A custom self-hosted agent cannot authenticate directly against any of these plans. The only practical exception is Claude Code, Anthropic's own CLI tool, which authenticates via the Claude Max subscription and can be driven as a subprocess by any program on the same machine. That exception is the hinge this entire article turns on, and I come back to it in the next section.

Option 3: pay per token. Every vendor also sells API access at per-token rates. This is open to any client and is what most self-hosted agents end up on. The cost follows the usage. Trivial exchanges cost little. Long-context reasoning at frontier rates is expensive on a per-call basis, and the bill scales linearly with the agent's workload.

My setup is a Hetzner VM with OpenClaw handling session routing, a Pinecone-backed RAG MCP server over my docs, and a Signal bridge. Everything around the LLM is already flat cost. Storage, compute, bandwidth, scheduled jobs. The LLM is the one line item that stays variable unless you deliberately shape it.

What Are the Two Choices, and Why Does Each Fall Short?

There are two honest options for a self-hosted agent, and neither is sufficient on its own. Flat-rate cheap models solve cost but cap your context. Pay-per-token frontier API access solves depth but reintroduces variance on exactly the days the agent earns its keep.

The approach I settled on is a flat plan for the bulk of the work, and a local Claude Code install on the same sandbox VM that OpenClaw can drive for specific high-impact tasks. OpenClaw does not authenticate against Claude at all. It spawns the claude CLI as a child process. Anthropic's guidance published on April 4, 2026 explicitly treats apps that delegate to the local claude binary as first-party usage, because the requests flow through Anthropic's own infrastructure, not through a third-party harness. That is the door the pattern walks through.

The cheap flat rate is Featherless. It gives you unlimited inference against a catalog of open models (GLM-5.1, Llama 3.3, DeepSeek V3, Gemma 4) for a flat monthly fee. No per-token metering, no invoice surprises. This is what handles the bulk of the agent's traffic.

The deep tier is Claude Code, Anthropic's CLI tool that runs Claude Opus 4.6 with native file access, MCP support, and a 1M-token context window on paid subscription plans. Under a Claude Max subscription, it is flat-rate for one developer. Because OpenClaw and Claude Code sit on the same sandbox VM, OpenClaw can invoke it with claude -p "<task>" whenever a request actually needs depth.

Here is what each tier actually gives you:

| Dimension | GLM-5.1 (Featherless flat rate) | Claude Opus 4.6 (Claude Code) |
|---|---|---|
| Cost | ~$25/month, unlimited | ~$100/month Max plan, or per-token |
| Context window | 32K tokens | 1M tokens (Claude Code on paid plans) |
| Reasoning depth | Good on routine work, thinner on multi-step | Frontier, multi-domain, tool-rich |
| File awareness | Needs manual context injection | Reads repos natively as an agent |
| Availability for self-hosted agents | OpenAI-compatible API, trivial to wire | Subprocess via the Claude Code CLI |

A note on the 1M number. Claude Opus 4.6 ships with a 200K context window in API general availability, plus an optional 1M beta on the Claude Developer Platform. Claude Code on Pro/Max/Team/Enterprise plans gets the full 1M window by default. Because this pattern drives Claude Code via the CLI under a Claude Max subscription, the elite worker runs with 1M tokens, not 200K.

Running only GLM-5.1 works until a task needs to read more than a handful of files or analyze a document longer than a few thousand words. The 32K ceiling shows up as truncation, shallow answers, or silent quality loss on exactly the tasks worth paying for.

Running only Claude Code under a subscription works until OpenClaw starts firing autonomous tool calls on its behalf. Every trivial greeting, status check, or RAG lookup draws from a quota designed for one keyboard. The quota is not the problem. Wiring a loop into the quota is the problem.

Most hosted agents solve this with tiered routing and a pay-per-token escalation to the frontier tier. It works, and it is the industry default. On a self-hosted VM with a personal agent, pay-per-token reintroduces the exact variance you were trying to avoid.

What Is the Two-Tier Flat-Flat Pattern?

The two-tier flat-flat pattern runs two flat-rate subscriptions in parallel, one per role. A cheap dispatcher handles volume. A frontier elite worker handles depth. The dispatcher decides, per message, which tier fits. Neither tier charges per token.

The dispatcher is GLM-5.1 on Featherless, served to OpenClaw via an OpenAI-compatible API. 32K context is enough for 90 percent of the traffic I send through the agent. Cost stays flat no matter how many messages I push.

The elite worker is Claude Code, authenticated through a Claude Max subscription and invoked as a subprocess with claude -p "<task>". 1M context, native file access, MCP support. Cost stays flat no matter how many heavy tasks I delegate to it.

Three properties of Claude Code make this possible:

- It is a CLI. You install it once with npm i -g @anthropic-ai/claude-code. Any program that can spawn a subprocess can drive it. In headless print mode (claude -p "<task>" --output-format json --max-turns N) it returns a structured result and exits.

- It authenticates via portable subscription credentials. Claude Code stores OAuth state in ~/.claude/.credentials.json. That file is copyable. Authenticate once on your laptop, scp the credential to any machine you control, and Claude Code on the second machine uses the same subscription. No API key, no per-token billing, no second seat.

- It speaks MCP natively. Any MCP server you expose via a .mcp.json file in the working directory becomes a first-class tool during its reasoning turns. The same Pinecone-backed RAG server my dispatcher uses is available to Claude Code with three lines of config.
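To make the MCP point concrete, here is a minimal sketch that writes a project-scoped .mcp.json declaring one stdio server. The server name kchain-knowledge comes from this setup. The launch command and script path are placeholders I made up for illustration, because they depend on how the RAG server is packaged.

```python
import json
import tempfile
from pathlib import Path


def write_mcp_config(workdir: Path, name: str, command: str, args: list[str]) -> Path:
    """Write a .mcp.json in `workdir` declaring a single stdio MCP server."""
    config = {"mcpServers": {name: {"command": command, "args": args}}}
    path = workdir / ".mcp.json"
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical launch command: replace with however your RAG server starts.
        written = write_mcp_config(
            Path(tmp), "kchain-knowledge", "node", ["/opt/rag-mcp/server.js"]
        )
        print(written.read_text())
```

Pointing the dispatcher and Claude Code at the same server entry is what keeps the two tiers grounded on one index.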

Put these together and the dispatcher and the elite worker share a filesystem (/opt/kchain-website on the VM) and a RAG (the same MCP server, called by both tiers). Context does not need to be serialized across tiers. Both read the same bytes.

The dispatcher handles greetings, status checks, single-file questions, RAG lookups. When a message asks for something that needs depth (a multi-file audit, a 5,000 word document analysis, a multi-step architectural plan) the dispatcher calls a tool named delegate_to_claude_code(task). The subprocess runs under the Claude Max subscription, reasons with up to 1M tokens of context, calls the same RAG server the dispatcher was calling, returns a result, exits. The dispatcher passes the result back to the user.
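The tool contract the dispatcher sees can be sketched as an MCP-style descriptor (name, description, JSON Schema for the arguments). The argument names match the wrapper's signature. The description text and the default values for maxTurns and timeoutSeconds are assumptions chosen for illustration, not the values in the running setup.

```python
from typing import Any

# MCP-style tool descriptor. Defaults below are illustrative assumptions.
DELEGATE_TOOL: dict[str, Any] = {
    "name": "delegate_to_claude_code",
    "description": (
        "Hand a deep task (multi-file audit, long document analysis, "
        "multi-step plan) to Claude Code. Do not use for greetings, "
        "status checks, or simple lookups."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "task": {"type": "string", "description": "Self-contained task text."},
            "maxTurns": {"type": "integer", "minimum": 1, "default": 10},
            "timeoutSeconds": {"type": "integer", "minimum": 1, "default": 120},
        },
        "required": ["task"],
    },
}


def normalize_args(args: dict[str, Any]) -> dict[str, Any]:
    """Reject a missing or empty task, then fill schema defaults."""
    schema = DELEGATE_TOOL["inputSchema"]
    for key in schema["required"]:  # only "task", which must be a non-empty string
        value = args.get(key)
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"missing or empty required argument: {key}")
    out = dict(args)
    for key, spec in schema["properties"].items():
        if "default" in spec:
            out.setdefault(key, spec["default"])
    return out
```

Keeping the negative guidance in the description helps a little, but it does not replace an explicit routing policy in the system prompt.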

You are not paying per token at either tier. You are paying two flat fees, one per role. The cheap tier absorbs volume. The expensive tier absorbs depth.

What Does the Architecture Look Like?

The architecture has five moving pieces: the dispatcher agent, the RAG MCP server, a delegation MCP wrapper, Claude Code as a subprocess, and a shared repo filesystem that both tiers can read.

    flowchart TB
        User[User<br/>Signal or WebChat] --> Gateway[OpenClaw Gateway<br/>systemd user unit]
        Gateway --> Dispatcher[Dispatcher agent<br/>GLM-5.1 via Featherless<br/>32K context]
        Dispatcher -->|simple queries| RAG[kchain-knowledge MCP<br/>Pinecone vector search]
        Dispatcher -->|complex tasks| Wrapper[claude-delegate MCP<br/>tool: delegate_to_claude_code]
        Wrapper -->|spawn subprocess| Claude[Claude Code CLI<br/>Opus 4.6, 1M context<br/>Claude Max subscription]
        Claude --> RAG
        Claude --> Repo["/opt/kchain-website<br/>full repo read/write"]
        Claude --> Wrapper
        Wrapper --> Dispatcher
        Dispatcher --> Gateway
        Gateway --> User
        style Dispatcher fill:#37326E,color:#fff
        style Claude fill:#14B8A6,color:#fff
        style RAG fill:#f5f5f5

Two design choices matter more than the rest.

Same RAG, two consumers. The dispatcher calls search_knowledge for factual lookups. Claude Code calls the same MCP server during its own reasoning turns. Context is not duplicated, re-indexed, or serialized. Both agents ground on the same source of truth. This is why I put the Pinecone-backed RAG MCP server on the VM rather than inside either tier. It is a shared service.

Subprocess isolation, shared filesystem. Claude Code runs in a child process with its own working directory and tool set. It reads the same repo bytes as the dispatcher. This is the cleanest way to give the elite worker deep context without inventing a cross-process message format. Each delegation spawns a fresh Claude Code process with a clean context. Cold start is 2 to 4 seconds. For the kind of deep tasks that get routed here, that latency is fine.

The delegation MCP wrapper is tiny. It exposes one tool to the dispatcher, delegate_to_claude_code(task, maxTurns, timeoutSeconds), and internally it runs:

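    # Run from the shared repo so Claude Code reads the same files as the dispatcher.
    cd /opt/kchain-website
    # Headless print mode: one task in, one JSON result out, bounded by timeout and turn count.
    timeout "$TIMEOUT" claude -p "$TASK" \
      --output-format json \
      --max-turns "$MAX_TURNS" \
      --permission-mode acceptEdits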

That is the whole integration. No API key handling. Claude Code finds the subscription credential at ~/.claude/.credentials.json automatically. Every invocation is logged to /var/log/claude-delegate/ with the full task text, runtime, and exit code, so forensics take 30 seconds.
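For readers who prefer the wrapper logic outside the shell, here is a rough Python sketch of the same call with a timeout and a one-line-per-delegation log. The function names, the log file name, and the default limits are hypothetical. It also assumes the JSON payload carries the final answer under a result key, which is worth checking against the output of your installed version.

```python
import json
import subprocess
import time
from pathlib import Path

REPO_DIR = "/opt/kchain-website"            # shared repo from the article
LOG_DIR = Path("/var/log/claude-delegate")  # log location from the article


def build_command(task: str, max_turns: int) -> list[str]:
    """Same flags as the shell snippet above."""
    return [
        "claude", "-p", task,
        "--output-format", "json",
        "--max-turns", str(max_turns),
        "--permission-mode", "acceptEdits",
    ]


def delegate(task, max_turns=10, timeout_seconds=120, run=subprocess.run,
             cwd=REPO_DIR, log_dir=LOG_DIR):
    """Run one headless delegation and append a JSON log line."""
    started = time.monotonic()
    try:
        proc = run(build_command(task, max_turns), cwd=cwd,
                   capture_output=True, text=True, timeout=timeout_seconds)
        exit_code, stdout = proc.returncode, proc.stdout
    except subprocess.TimeoutExpired:
        exit_code, stdout = 124, ""  # 124 mirrors the exit code of `timeout`
    runtime = round(time.monotonic() - started, 2)

    # Assumption: the final answer sits under "result" in the JSON output.
    try:
        answer = json.loads(stdout).get("result", "")
    except (json.JSONDecodeError, AttributeError):
        answer = stdout  # fall back to raw text if the output is not a JSON object

    log_dir = Path(log_dir)
    log_dir.mkdir(parents=True, exist_ok=True)
    with open(log_dir / "delegations.jsonl", "a", encoding="utf-8") as fh:
        fh.write(json.dumps({"task": task, "runtime_s": runtime,
                             "exit_code": exit_code}) + "\n")
    return {"exit_code": exit_code, "runtime_s": runtime, "answer": answer}
```

The run parameter exists only so the function can be exercised with a stub instead of the real binary.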

How Does the Dispatcher Decide When to Delegate?

The dispatcher decides by following an explicit routing policy in its system prompt. Without rules, a reasoning model given a powerful tool will either delegate everything or nothing. A tool description alone is not enough.

I tested this directly. A tool description that said "use this for complex tasks" triggered delegation on roughly 40 percent of incoming messages, including greetings. The model reasoned that "complex" was subjective, so it delegated to be safe. The subscription quota melted inside a day.

The fix is positive and negative examples, written in the system prompt. Here is the shape of the policy I run:

    ALWAYS delegate to Claude Code when:
    1. The user asks for "deep analysis", "architecture review",
       "code audit", "refactor plan", or similar keywords.
    2. The task requires reading more than 3 source files.
    3. The task involves analyzing a document longer than 2000
       words of technical or legal content.
    4. The task requires multi-step reasoning across more than
       2 distinct domains (code plus legal, architecture plus
       business strategy).
    5. The expected answer is longer than 800 words and must
       be precise.

    NEVER delegate when:
    1. It is a simple RAG lookup. Call search_knowledge directly.
    2. It is a single-file question. Read the file and answer.
    3. It is a greeting, acknowledgment, or status update.
    4. The answer fits in fewer than 200 words.
    5. The user already answered themselves in context.

    Before delegating, run at most 2 search_knowledge calls to
    confirm the task is not already answered by the RAG.

    When delegating, tell the user explicitly: "Routing this to
    Claude Code for deep analysis, this may take up to 30 seconds."
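The policy lives in the system prompt, but its numeric thresholds can also be mirrored as a deterministic guard inside the wrapper, as a second line of defense against over-delegation. This is a hypothetical sketch, not part of the setup described here: TaskHints and its fields are illustrative, and how you estimate them (file counts, word counts) is left open.

```python
from dataclasses import dataclass

# Keywords taken from rule 1 of the ALWAYS list.
DEEP_KEYWORDS = ("deep analysis", "architecture review", "code audit", "refactor plan")


@dataclass
class TaskHints:
    message: str
    source_files: int = 0    # files the task must read
    document_words: int = 0  # length of any attached document
    domains: int = 1         # distinct domains involved
    expected_words: int = 0  # estimated answer length


def should_delegate(hints: TaskHints) -> bool:
    """True only if at least one ALWAYS rule fires; everything else stays on the dispatcher."""
    text = hints.message.lower()
    return (
        any(keyword in text for keyword in DEEP_KEYWORDS)
        or hints.source_files > 3
        or hints.document_words > 2000
        or hints.domains > 2
        or hints.expected_words > 800
    )
```

A guard like this cannot judge intent, so it works best as a veto on obviously trivial delegations rather than as the router itself.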

After installing this policy, delegation rate dropped from around 40 percent to around 5 percent on my Signal and WebChat traffic. The dispatcher still handles everything it can. Claude Code only runs when the task actually needs more context than the dispatcher's 32K window or multi-step reasoning.

How Much Does This Actually Cost?

The two-tier flat-flat pattern costs $125 per month for a dispatcher plus a deep worker, regardless of how hard you use the agent. That is $25 for Featherless (GLM-5.1 unlimited) plus $100 for Claude Max (Claude Code under subscription auth).

To make this concrete, compare three scenarios.

Scenario A: pure pay-per-token with Opus 4.6 on everything. Assume 100 interactions per day, 500 tokens in and 800 tokens out each, at Opus 4.6 rates (April 2026): $15 per million input, $75 per million output. Daily cost is $6.75. Monthly baseline is around $200. Every deep analysis adds a marginal cost of roughly $0.75 per task. Three deep tasks per day push monthly spend to about $270. Pay-per-token is linear in usage, and it is the heavy days (the days you actually need the agent) that make the bill jump.
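The Scenario A arithmetic can be checked with a few lines. This sketch assumes a 30-day month and uses the rates and token counts quoted in the scenario. The function name is mine.

```python
INPUT_USD_PER_MTOK = 15   # input rate quoted in Scenario A
OUTPUT_USD_PER_MTOK = 75  # output rate quoted in Scenario A


def per_token_cost_usd(calls: int, tokens_in: int, tokens_out: int) -> float:
    """Cost of `calls` identical requests at the per-token rates above."""
    # tokens * (USD per million tokens) yields millionths of a dollar.
    micro_usd = calls * (tokens_in * INPUT_USD_PER_MTOK + tokens_out * OUTPUT_USD_PER_MTOK)
    return micro_usd / 1_000_000


daily = per_token_cost_usd(100, 500, 800)
monthly = per_token_cost_usd(100 * 30, 500, 800)  # assumes a 30-day month
with_deep_tasks = monthly + 3 * 30 * 0.75         # three deep tasks a day at ~$0.75 each

print(daily, monthly, with_deep_tasks)  # 6.75 202.5 270.0
```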

Scenario B: pure flat-rate cheap model, GLM-5.1 via Featherless. Around $25 per month. Unlimited inference, no surprises. Context window is 32K tokens, which is the operational ceiling. Anything longer (a regulatory PDF, a three-file cross-reference, a long chat history) either gets truncated or fails. You pay nothing for a busy day, but you cannot do the 5 percent of tasks that need deep context. Those tasks either do not happen or get pushed back to your personal workstation, manually.

Scenario C: two-tier flat-flat with delegation. $25 for GLM-5.1 on Featherless, $100 for Claude Code on Claude Max, $125 total per month, flat. What you buy for that:

- Predictability. Heavy weeks cost the same as light ones. No budget alerts.

- A 32K-context, always-on dispatcher for trivial and medium work. No per-call cost, so the agent can run as a Signal bot, a WebChat assistant, and a cron-driven watcher simultaneously.

- A 1M-context, deep-reasoning elite worker on tap. Feed it a 40K-token document, ask for an audit across 15 files, request a long-form plan. Same $125 monthly fee whether you trigger it twice a day or twenty times.

The pay-per-token equivalent of Scenario C's delegation rate, assuming 5 delegations per day at 10K input and 2K output each, is roughly $45 per month at Opus rates. So on a quiet month, Scenario C pays $80 more than pay-per-token would have cost for delegations alone.

The inversion happens on heavy days. One long document audit at 50K input and 10K output costs about $1.50 at Opus rates. Two such tasks in a month add $3 on top of the roughly $200 baseline. Run three deep audits every week and Scenario A moves past $220. Scenario C stays at $125 no matter what.
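One way to see where the flat deep tier starts paying for itself is to compute a break-even call count. The sketch below takes the $100 Claude Max fee as the flat cost of the deep tier, which is an assumption on my part (you could equally compare against the full $125), and reuses the Opus rates and task sizes quoted above.

```python
def call_cost_micro_usd(tokens_in: int, tokens_out: int,
                        in_rate: int = 15, out_rate: int = 75) -> int:
    """Per-call cost in millionths of a dollar (rates are USD per million tokens)."""
    return tokens_in * in_rate + tokens_out * out_rate


def breakeven_calls(flat_fee_usd: int, tokens_in: int, tokens_out: int) -> int:
    """Smallest monthly call count whose per-token cost reaches the flat fee."""
    per_call = call_cost_micro_usd(tokens_in, tokens_out)
    return -(-flat_fee_usd * 1_000_000 // per_call)  # integer ceiling division


print(breakeven_calls(100, 10_000, 2_000))   # 334 routine delegations per month
print(breakeven_calls(100, 50_000, 10_000))  # 67 long audits per month
```

At roughly 11 routine delegations a day, or a little over two long audits a day, per-token spend on the deep tier alone would match the subscription. Below that, the flat fee is buying predictability rather than savings.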

The decisive factor is variance, not average. Pay-per-token is linear. Subscription is flat. If your workload has spikes (document analysis, architecture review, long-form writing, multi-file audits) flat rate wins on exactly the days the agent earned its keep. You never decide in advance whether a task is "worth the money". The dispatcher decides. The delegation happens. The accounting does not change.

What Are the Limits of This Pattern?

The pattern has real limits. It is not a universal answer. Four constraints are worth knowing before you commit.

Cold start latency. Every claude -p invocation spends 2 to 4 seconds spinning up the Node runtime, loading the credential cache, and connecting to MCP servers. Add 5 to 30 seconds of reasoning on top. Total wall clock for a delegated task is 7 to 35 seconds. Fine for deep analysis. Wrong for conversational latency targets.

Subscription is single-user by design. Copying credentials between two machines technically works, but Anthropic treats subscriptions as personal. A team deployment where many users share one subscription violates the intent. This pattern fits a solo builder running a personal agent. It does not fit a SaaS backend serving third parties.

Over-delegation risk. Reasoning models given a powerful tool tend to overuse it. Without explicit positive and negative examples in the system prompt, the dispatcher will route 30 to 50 percent of traffic to Claude Code instead of 5 percent, and the subscription quota will hit the ceiling fast. The routing policy is not optional.

Prompt injection on file writes. Claude Code with --permission-mode acceptEdits can modify files on the VM. If the dispatcher is exposed to untrusted users, a crafted prompt could instruct the elite worker to exfiltrate or tamper with data. On a personal sandbox this is acceptable. For anything user-facing, run Claude Code with --permission-mode plan, which limits it to read-only analysis and planning, so no mutation happens without a human acting on the plan.
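A small, hypothetical sketch of how a wrapper could pick the permission mode per sender instead of hard-coding it. The sender identifiers and the allowlist are illustrative. Only the two --permission-mode values named above are used.

```python
def permission_mode_for(sender: str, trusted: frozenset) -> str:
    """Edit rights only for allowlisted senders; the stricter plan mode otherwise."""
    return "acceptEdits" if sender in trusted else "plan"


def claude_args(task: str, sender: str, trusted: frozenset, max_turns: int = 10) -> list:
    """Build the headless command line with a sender-dependent permission mode."""
    return [
        "claude", "-p", task,
        "--output-format", "json",
        "--max-turns", str(max_turns),
        "--permission-mode", permission_mode_for(sender, trusted),
    ]


TRUSTED = frozenset({"owner-signal-id"})  # hypothetical identifier for the owner
print(claude_args("audit the repo", "unknown-webchat-user", TRUSTED)[-1])  # plan
```

This narrows the blast radius but does not make untrusted input safe. The task text still reaches the model, so anything sensitive in the repo or the RAG remains readable.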

The Question Behind the Pattern

The pattern started from a frustration I share with most builders I talk to. I wanted the benefits of a frontier model without the shape of its billing. I wanted predictability, deep context, and a cheap baseline on the same agent, on the same VM. The industry answer was "pick one". I was not willing to.

The shift that made this work was realizing Claude Code is not only a coding assistant. It is a subprocess-friendly reasoning engine with portable subscription auth and native MCP support. Once you see it that way, the delegation pattern writes itself. Any cheap dispatcher that can spawn a subprocess can hand off to it. Any shared RAG can be consumed by both tiers. Any repo on disk is available to the elite worker without serialization.

I used this pattern on my personal agent, but the same architecture applies to any self-hosted reasoning system that needs predictable costs and occasional depth. It is not about the specific models (GLM-5.1 and Opus 4.6 are convenient, not required). It is about the structure: two flat rates, one dispatcher, one elite worker, shared context.

The interesting question is not which model is cheaper. The interesting question is who decides when the task justifies the deep tier. If the decision lives in the dispatcher's system prompt, the economics work. If the decision lives in your head and you have to manually escalate, the agent has become another tool you babysit.

I wanted an agent I could leave running. This is what leaving it running looks like.

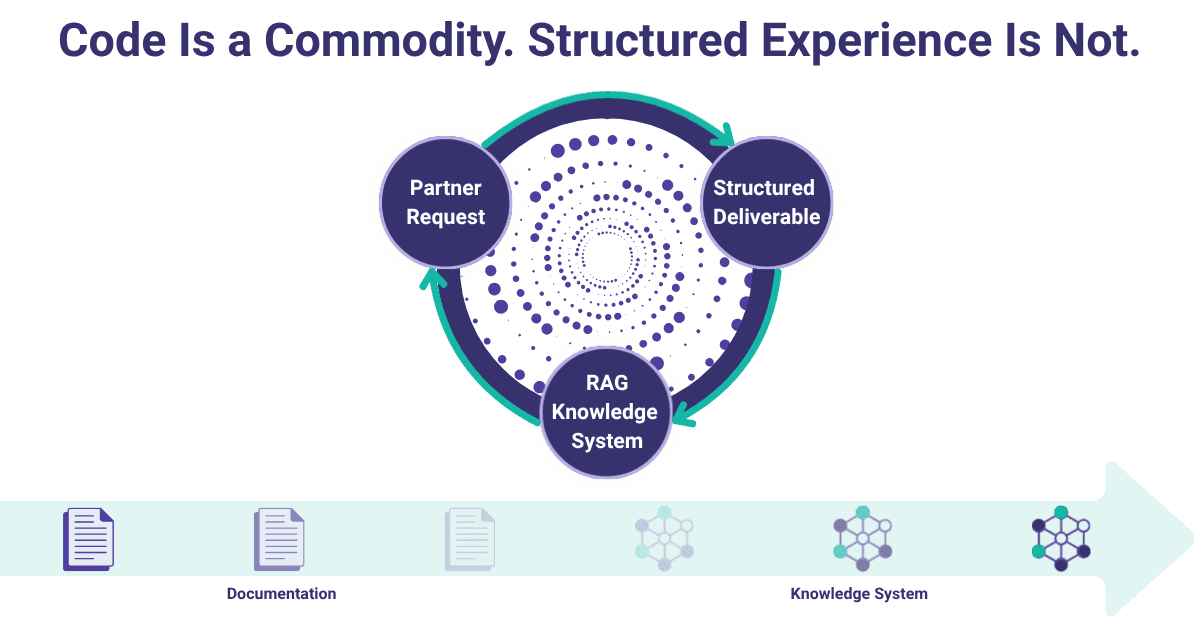

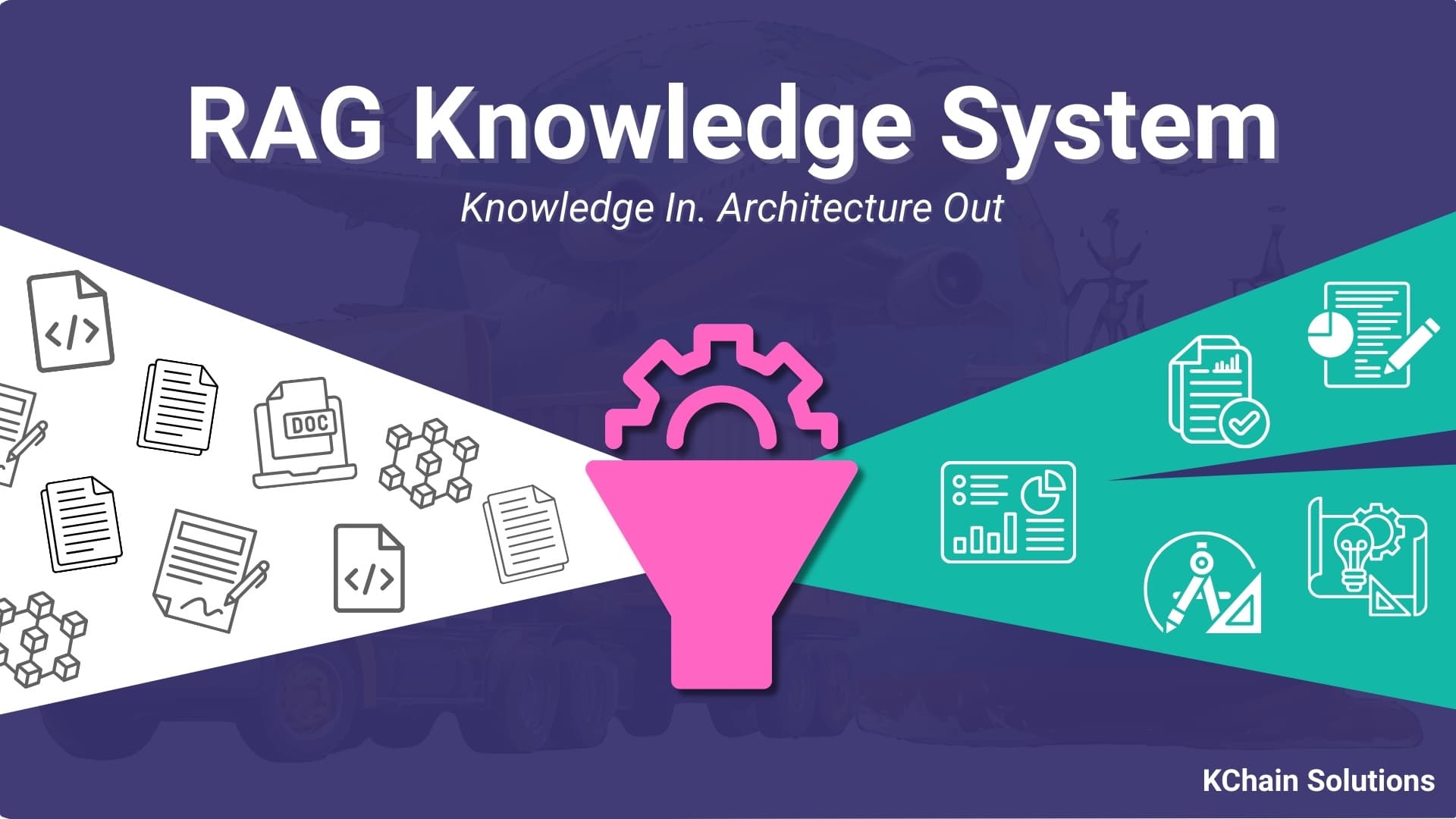

For the infrastructure side of this kind of setup (how the RAG, the OpenClaw gateway, and the MCP servers fit together on a single VM), the RAG knowledge systems post walks through the retrieval layer I use as the shared tier here. If you are evaluating where to host trust infrastructure that feeds an agent like this, the blockchain vs cloud decision framework covers the cost model I apply to the rest of the stack.

Valerio Mellini

Founder & IOTA Foundation Solution Architect

10+ years in software architecture across Accenture, PwC, Wolters Kluwer, and Ubiquicom. Certified Blockchain Solutions Architect. Helping enterprises implement production-grade blockchain systems with architecture-first methodology.