RAG Knowledge Systems: The Real Competitive Edge in Blockchain Consulting

The Blockchain Consulting Time Tax (And Why I Built a RAG System)

Here's the uncomfortable truth: when a blockchain consultant bills $300/hour, clients often end up paying senior rates for tasks that are increasingly automatable.

If a consultant asks ChatGPT "how does IOTA Identity handle DID revocation?" during a discovery call, the issue isn't the tool - it's the process. Retrieval is no longer scarce. Judgment is.

Human time is the most expensive and least scalable resource in consulting. Every architecture assessment hits the same initial bottleneck: understanding client scope and requirements, reviewing documentation, researching regulatory updates, and synthesizing patterns from past projects.

I've done this for 10 years. The research phase is valuable - it's how expertise is built. But repeating the same retrieval-heavy cycle for every project doesn't scale. Clients end up paying senior rates for foundational analysis that a well-structured knowledge system could handle as a first layer.

So I built a RAG system. Not for hype - but for leverage. To automate the repeatable part of expertise, and free up human time for what actually requires architectural judgment, trade-offs, and accountability.

Why We Built a RAG System (Not Just Used ChatGPT)

Everyone has access to GPT-4. Everyone has Claude. The model is commoditized.

The competitive advantage is the knowledge you feed it.

A general-purpose LLM doesn't know that IOTA's Gas Station pattern abstracts transaction costs from end users. It doesn't know that our DPP implementations use hierarchical namespaces for multi-tenant supply chains. It doesn't know that we've documented 24 months of IOTA Identity architecture decisions.

And when you ask it something it doesn't know, it hallucinates. Confidently. With citations that don't exist.

Here's a concrete example: ask most LLMs about IOTA and they'll tell you it's a "feeless blockchain." It's not. Every transaction on IOTA costs approximately 0.005 IOTA. The Gas Station pattern abstracts these costs from end users, but the fees exist. If your consultant's architecture proposal is based on "zero transaction costs" because that's what ChatGPT told them, you have a problem before you've written a single line of code.
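A quick back-of-the-envelope sketch shows why the "feeless" assumption matters at scale. The per-transaction fee is the approximate figure quoted above; the transaction volumes are hypothetical and chosen only for illustration.

```python
from decimal import Decimal

# Approximate per-transaction fee quoted above, in IOTA.
FEE_PER_TX_IOTA = Decimal("0.005")


def estimated_fees(tx_count: int, fee_per_tx: Decimal = FEE_PER_TX_IOTA) -> Decimal:
    """Return the total fee, in IOTA, for a given number of transactions."""
    if tx_count < 0:
        raise ValueError("tx_count must be non-negative")
    return fee_per_tx * tx_count


# Hypothetical volumes: a pilot, a product line, a large supply chain.
for volume in (10_000, 1_000_000, 50_000_000):
    print(f"{volume:>12,} transactions -> {estimated_fees(volume):,.0f} IOTA")
```

At one million transactions the estimate is 5,000 IOTA. Small per transaction, but not zero, and someone (typically whoever runs the Gas Station) has to budget for it.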

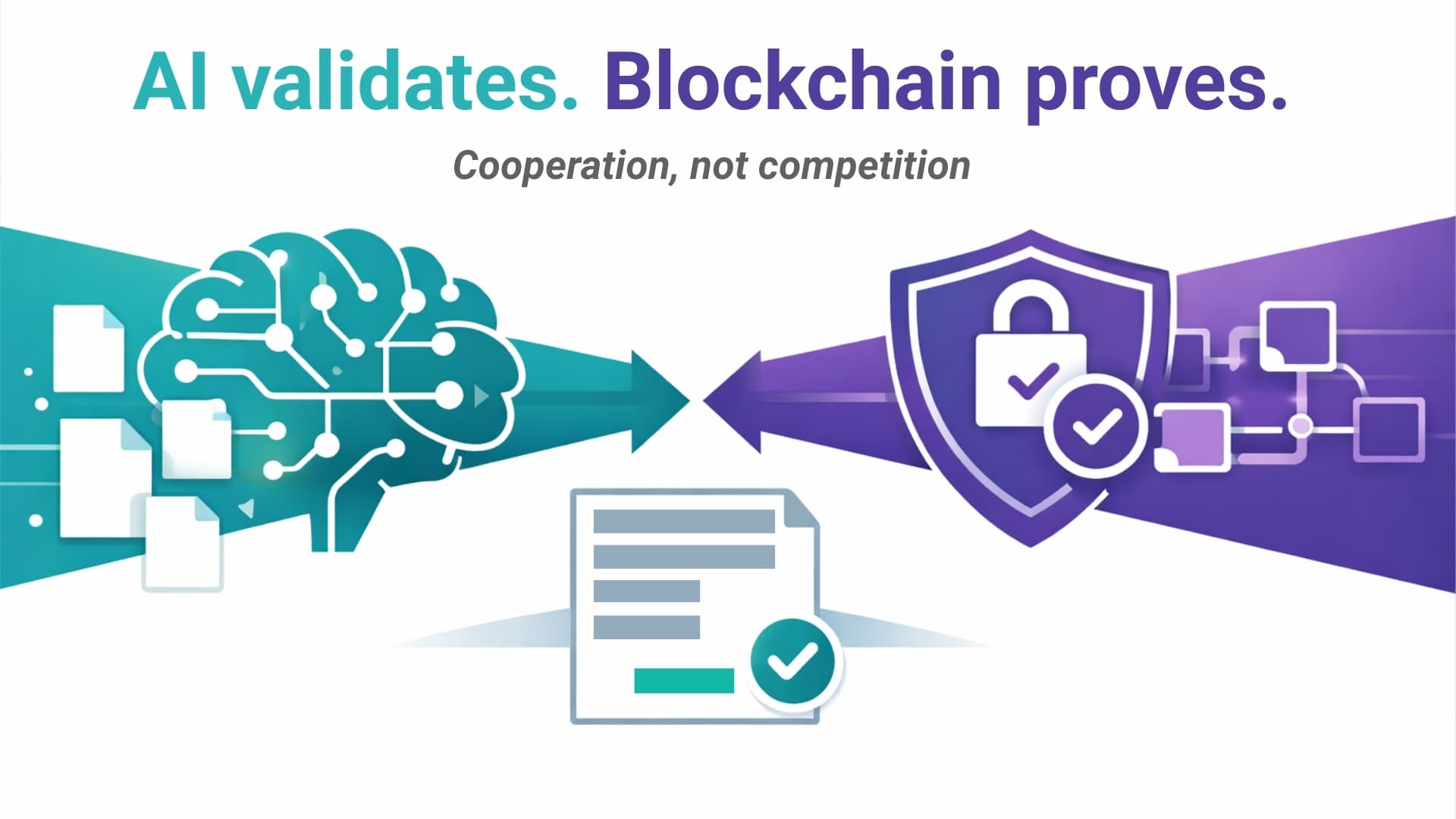

This is the kind of domain-specific accuracy that a general-purpose model simply cannot guarantee. You need a system that retrieves verified facts before generating answers.

What Is a RAG System (And Why It Matters)

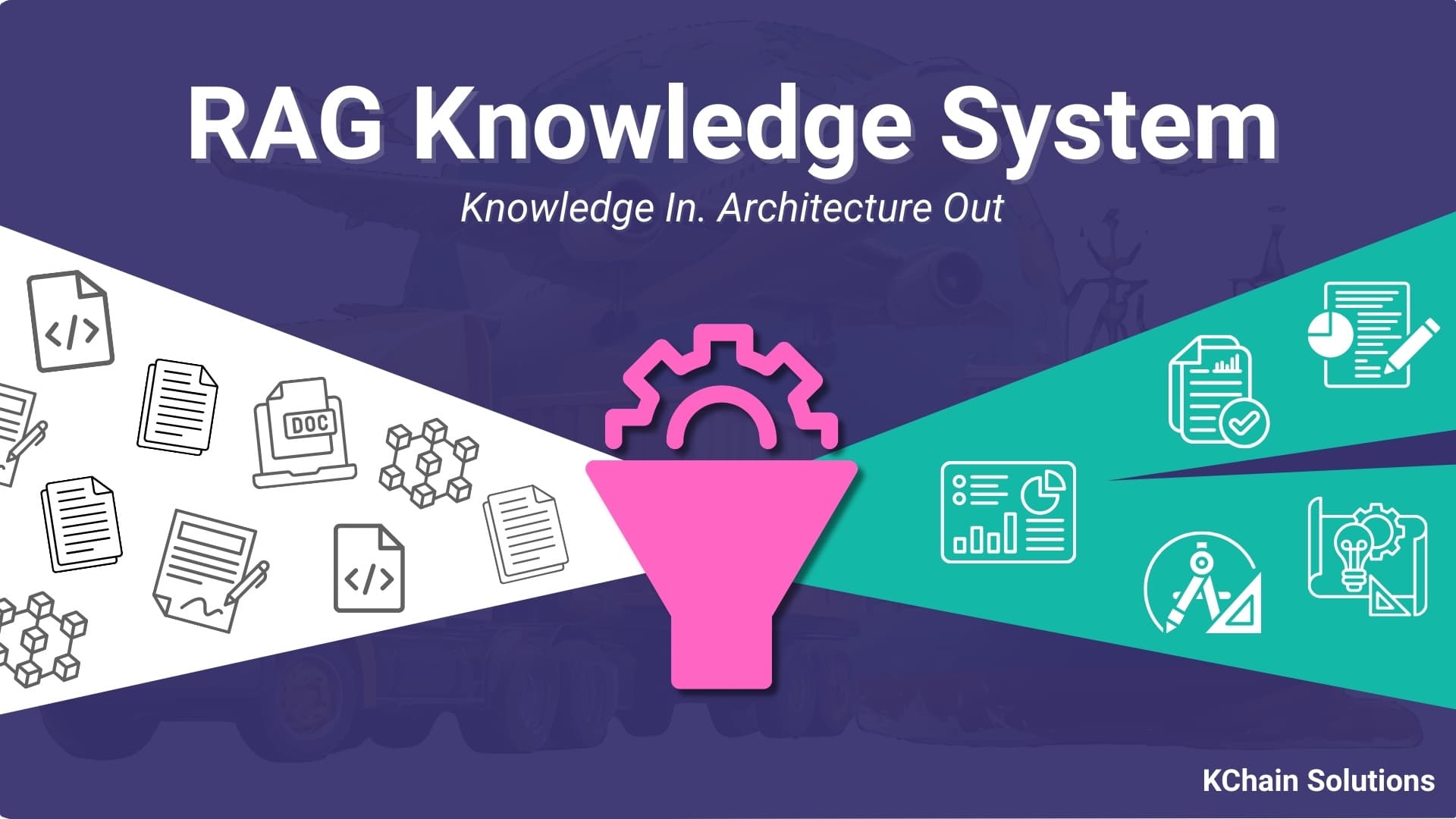

RAG stands for Retrieval-Augmented Generation. The concept is straightforward: before an LLM generates a response, it first searches a curated knowledge base and retrieves the most relevant documents. Then it reasons over those documents to produce an answer.

Think of it like this: instead of asking a very smart generalist a question and hoping they remember correctly, you're asking that same generalist to first read the 10 most relevant pages from your private library, then answer. The source material is right there. Verified. Cited. Up to date.
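The retrieve-then-answer flow can be sketched in a few lines. This is a toy illustration, not our production pipeline: the three-entry knowledge base and its source labels are hypothetical, and a bag-of-words cosine similarity stands in for a real embedding model and vector database.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base: (source label, text) pairs.
KNOWLEDGE_BASE = [
    ("iota-docs/gas-station",
     "The Gas Station pattern abstracts transaction fees from end users."),
    ("iota-docs/identity",
     "IOTA Identity manages decentralized identifiers and verifiable credentials."),
    ("kchain/dpp-notes",
     "DPP implementations use hierarchical namespaces for multi-tenant supply chains."),
]


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(question: str, top_k: int = 2) -> list[tuple[str, str]]:
    """Return the top_k most similar (source, text) pairs."""
    q = tokenize(question)
    ranked = sorted(KNOWLEDGE_BASE,
                    key=lambda doc: cosine(q, tokenize(doc[1])),
                    reverse=True)
    return ranked[:top_k]


def build_prompt(question: str) -> str:
    """Put the retrieved sources in front of the question before calling an LLM."""
    context = "\n".join(f"[{source}] {text}" for source, text in retrieve(question))
    return (
        "Answer using only the sources below and cite them by label.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


print(build_prompt("Who pays transaction fees in the Gas Station pattern?"))
```

The prompt that reaches the model already contains the relevant passages and their labels, which is what makes source-attributed answers possible.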

RAG architecture diagram - courtesy of Pinecone

The LLM still does the reasoning. But now it's grounded in verified, source-attributed documentation. Every answer includes provenance: "This is from our August 2025 architecture consulting review."

Far fewer hallucinations, because every answer is tied to a retrieved source. No billing clients for research time. No consultant forgetting lessons learned 18 months ago. And no LLM confidently stating that IOTA is feeless because that's what it learned from outdated training data.

What's Inside KChain's Knowledge Base

Transparency matters. Here's what's indexed:

IOTA Developer Documentation (400+ pages, auto-synced from GitHub)

- IOTA Trust Framework technical specs: Identity, Notarization, Hierarchies, Gas Station, Secret Storage

- GraphQL RPC documentation for on-chain queries

- Move smart contract patterns, transaction lifecycle, epoch mechanics

- Reference implementations and SDK examples

Past Architecture Decisions

- Every IOTA Trust Framework integration we've scoped

- Component selection rationale (when to use Secret Storage vs cloud HSM)

- Performance benchmarks from real projects

- What didn't work (and why)

Partner Feedback & Lessons Learned

- Questions from actual discovery calls

- Client pain points that recur across industries

- Compliance requirements specific to EU DPP regulation

- Integration patterns for ERP/PLM systems

Strategy & Regulatory Context

- Positioning documents, messaging architecture, pricing models

- GDPR/eIDAS analysis for identity systems

- Supply chain regulatory timelines (ESPR, CBAM, EUDR, battery passports)

Published Content

- Blog posts like our IOTA Trust Framework technical breakdown

- Case studies with implementation details

This isn't a chatbot. It's institutional memory. Searchable. Cited. Updated weekly.
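"Cited" and "updated weekly" both come down to metadata kept next to each indexed document. Here is a minimal sketch, assuming a seven-day refresh window; the class name, field names, and sample entries are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class IndexedDocument:
    source: str        # where the text came from, used for citations
    category: str      # e.g. "developer-docs", "architecture-decisions"
    last_synced: date  # when the indexed copy was last refreshed


def stale_documents(docs, today, max_age=timedelta(days=7)):
    """Return documents whose indexed copy is older than the refresh window."""
    return [d for d in docs if today - d.last_synced > max_age]


# Hypothetical entries, for illustration only.
docs = [
    IndexedDocument("iota-docs/notarization", "developer-docs", date(2025, 8, 11)),
    IndexedDocument("decisions/secret-storage-vs-hsm", "architecture-decisions",
                    date(2025, 7, 1)),
]

for doc in stale_documents(docs, today=date(2025, 8, 15)):
    print(f"needs re-sync: {doc.source} (last synced {doc.last_synced})")
```

A check like this can run before each sync so that anything outside the window is flagged rather than silently served as current.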

How This Changes the Architecture Process

Before RAG:

- Client asks: "How could we implement an audit trail scenario on IOTA?"

- I spend 30 minutes re-reading IOTA Notarization docs, reviewing past audit trail architectures, checking which patterns worked and which didn't

- I sketch an initial approach from memory

- Client gets billed for research time before we even start refining

After RAG:

- Client asks the same question

- RAG retrieves: IOTA Notarization patterns, an audit trail architecture from a similar manufacturing project, compliance notes on data retention requirements, and integration examples with existing ERP systems

- In seconds, I have a baseline scenario adapted to the client's industry

- I spend the entire session refining the architecture for their specific use case, not rebuilding context from scratch

Human time is limited. RAG is extremely scalable.

And here's the part most people miss: every iteration makes the system smarter. When I refine that audit trail architecture with the client and document the decisions we make, those insights go back into the knowledge base. The next client with a similar question gets an even better starting point. Each engagement compounds into the system's performance.
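The feedback loop is mostly a discipline, but the mechanics are simple: each documented decision becomes one more indexable chunk with its own citation label. A rough sketch, assuming an in-memory list as the knowledge base; the record fields and the sample values are placeholders, not real project data.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DecisionRecord:
    project: str
    decided_on: date
    question: str
    decision: str
    rationale: str

    def to_chunk(self) -> dict:
        """Render the record as an indexable text chunk with a citation label."""
        return {
            "source": f"decisions/{self.project}/{self.decided_on.isoformat()}",
            "text": (
                f"Q: {self.question}\n"
                f"Decision: {self.decision}\n"
                f"Why: {self.rationale}"
            ),
        }


# Stand-in for the real index; a vector database would replace this list.
knowledge_base: list[dict] = []

# Placeholder content, for illustration only.
record = DecisionRecord(
    project="example-manufacturer",
    decided_on=date(2025, 8, 15),
    question="How should audit trail entries be anchored?",
    decision="(placeholder) summary of the agreed approach",
    rationale="(placeholder) trade-offs discussed with the client",
)
knowledge_base.append(record.to_chunk())

print(knowledge_base[-1]["source"])
```

Keeping the rationale in the chunk matters as much as the decision itself: the next retrieval should surface why an option was chosen, not only which one.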

This is the shift. Junior architects can query the knowledge base and get senior-level context instantly. Clients get faster, better-grounded answers. I stop re-solving problems I've already solved. And the knowledge base gets richer with every project.

And here's the part that makes this critical for IOTA Trust Framework adoption: RAG drastically lowers integration costs.

When a manufacturer asks, "How do we implement Digital Product Passports on IOTA?", the RAG system pulls:

- Technical architecture patterns from our reference implementations

- Cost estimates from past DPP projects

- Regulatory compliance checklists (ESPR Annex I, CBAM data requirements)

- Integration points with existing ERP systems (SAP, Oracle, Microsoft Dynamics)

The discovery call becomes a refinement session, not a research sprint. We're not starting from zero. We're starting from "here's what worked for similar organizations."

This is how you make complex technology accessible. Not by dumbing it down. By structuring knowledge so it can be retrieved on demand.

On-Chain Data Meets Natural Language

Now let's talk about the future direction, because this is where RAG becomes genuinely powerful for supply chain intelligence.

Right now, querying blockchain data requires GraphQL. You need to know the schema. You need to understand epochs, checkpoints, transaction outputs. You need a developer.

But what if you could just ask: "Show me all audit trail entries for Product SKU X in the last 6 months"?

That's what happens when you synchronize on-chain data with a RAG system.

IOTA's GraphQL RPC already exposes transaction data, events, and object states. We can ingest that data into a vector database. Then your compliance officer can query in plain English:

- "Which suppliers have updated their carbon footprint declarations this quarter?"

- "Show me all credential verifications for this batch of lithium batteries"

- "Has anyone tampered with the maintenance log for Asset ID 4729?"
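Questions like these only work if each on-chain record has been turned into searchable text while keeping a pointer back to its transaction. A minimal sketch of that ingestion step follows. The event shape (`type`, `subject`, `timestamp`, `tx_digest`, `payload`) is a hypothetical, already-fetched record, not the actual response format of IOTA's GraphQL RPC.

```python
import json


def event_to_chunk(event: dict) -> dict:
    """Flatten one on-chain event into a text chunk plus citation metadata."""
    text = (
        f"{event['type']} for {event['subject']} at {event['timestamp']}: "
        f"{json.dumps(event['payload'], sort_keys=True)}"
    )
    return {
        "text": text,  # what gets embedded and searched
        "metadata": {  # what gets shown as the citation
            "tx_digest": event["tx_digest"],
            "timestamp": event["timestamp"],
        },
    }


# Hypothetical event, for illustration only.
sample_event = {
    "type": "MaintenanceLogUpdated",
    "subject": "Asset ID 4729",
    "timestamp": "2025-08-15T14:23:00Z",
    "tx_digest": "EXAMPLE_DIGEST",
    "payload": {"field": "last_service", "value": "2025-08-14"},
}

chunk = event_to_chunk(sample_event)
print(chunk["text"])
print(chunk["metadata"])
```

The text goes to the embedding model; the metadata travels with it so every retrieved chunk can link back to its transaction.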

The RAG system translates natural language into structured queries, retrieves the data, and formats it into a human-readable answer. With citations. With links back to the on-chain transaction.
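One way to keep that translation step safe is to have the LLM emit a small structured intent, validate it, and only then fill a fixed query template. The sketch below assumes the model returns JSON with three fields. The event types and the GraphQL query shape are placeholders, not IOTA's real schema.

```python
import json
from dataclasses import dataclass
from datetime import date

# Hypothetical whitelist of event types the system is allowed to query.
ALLOWED_EVENT_TYPES = {"AuditTrailEntry", "CredentialVerification"}

# Placeholder query shape; NOT the real IOTA GraphQL schema.
QUERY_TEMPLATE = """
query Events($type: String!, $subject: String!, $since: String!) {
  events(filter: {type: $type, subject: $subject, since: $since}) {
    txDigest
    timestamp
  }
}
""".strip()


@dataclass(frozen=True)
class QueryIntent:
    event_type: str
    subject_id: str
    since: str  # ISO 8601 date, e.g. "2025-02-15"


def parse_intent(llm_json: str) -> QueryIntent:
    """Validate the LLM's structured output before it touches a query."""
    data = json.loads(llm_json)
    intent = QueryIntent(data["event_type"], data["subject_id"], data["since"])
    if intent.event_type not in ALLOWED_EVENT_TYPES:
        raise ValueError(f"unsupported event type: {intent.event_type}")
    date.fromisoformat(intent.since)  # raises ValueError on a malformed date
    return intent


def to_graphql_request(intent: QueryIntent) -> dict:
    """Build the request body; values go in variables, never into the query text."""
    return {
        "query": QUERY_TEMPLATE,
        "variables": {
            "type": intent.event_type,
            "subject": intent.subject_id,
            "since": intent.since,
        },
    }


# What an LLM might return for "audit trail entries for Product SKU X since February".
llm_output = '{"event_type": "AuditTrailEntry", "subject_id": "SKU-X", "since": "2025-02-15"}'
print(json.dumps(to_graphql_request(parse_intent(llm_output)), indent=2))
```

Passing values as GraphQL variables, rather than interpolating them into the query string, keeps a badly phrased or malicious question from rewriting the query itself.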

And because blockchain data is immutable and timestamped, the answers are verifiable. "This credential was issued on 2025-08-15 at 14:23 UTC. Transaction hash: 0x7a9f..."

This is how you make blockchain useful beyond crypto enthusiasts. You make the data queryable by the people who need it, in the language they already speak.

Why Every Solution Architect Will Have a Knowledge Base

In 3 years, asking "Do you have a knowledge system?" will be like asking "Do you use version control?" in 2015.

The answer will be obvious. Of course we do. How else would we work?

Here's why:

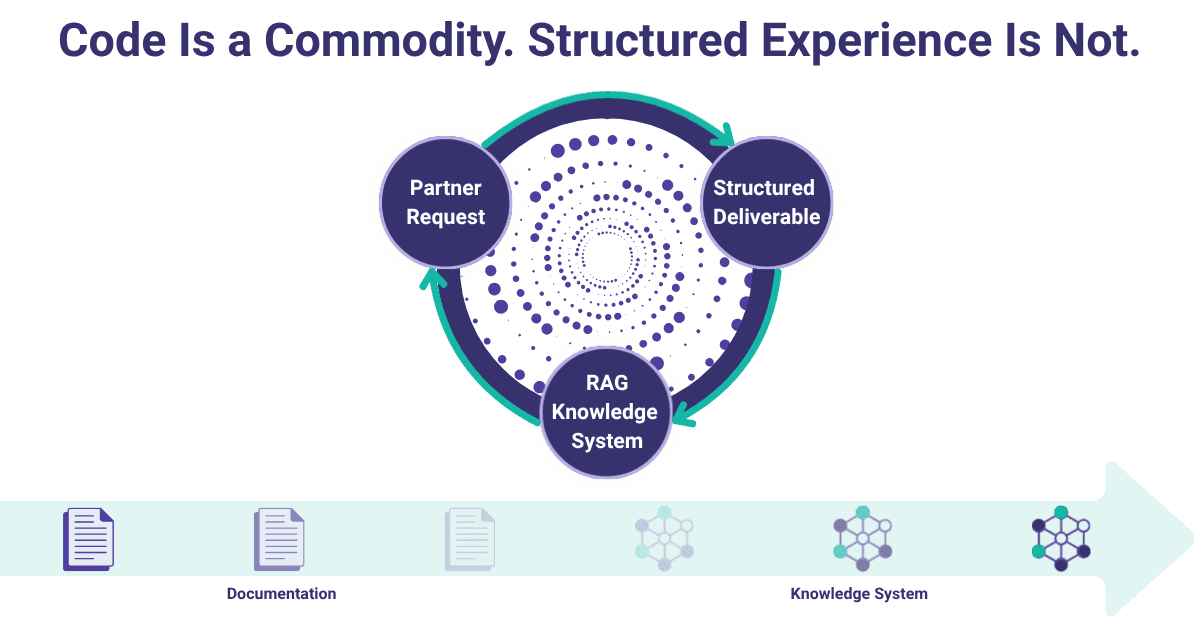

Models are commoditized. Knowledge differentiates.

Claude Opus costs about $15 per million input tokens. GPT-4o costs about $2.50 per million. In 12 months, there will be open-source models at 95% capability for pennies.
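To put those prices in per-query terms, here is a rough worked example. It treats the figures above as input-token prices and ignores output tokens; the query size (10 retrieved pages of about 500 tokens each, plus a 200-token question) is an assumption for illustration.

```python
from decimal import Decimal

# Prices quoted above, in USD per million tokens (treated as input-token prices).
PRICE_PER_MILLION = {
    "Claude Opus": Decimal("15.00"),
    "GPT-4o": Decimal("2.50"),
}


def cost_per_query(model: str, input_tokens: int) -> Decimal:
    """Return the input-token cost in USD for a single query."""
    return PRICE_PER_MILLION[model] * input_tokens / Decimal(1_000_000)


# Assumed query size: 10 retrieved pages x ~500 tokens + a 200-token question.
tokens = 10 * 500 + 200

for model in PRICE_PER_MILLION:
    print(f"{model}: ${cost_per_query(model, tokens):.3f} per query")
```

Under those assumptions a 5,200-token query costs about $0.078 on Claude Opus and $0.013 on GPT-4o. Either way, the model call is a rounding error next to an hour of senior consulting time.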

The model is not your moat. The curated expertise you feed it is.

Every system integrator, every solution architect, every consulting firm will build their own knowledge base. The question is: how complete is it? How well-structured? How often is it updated?

When you evaluate vendors, you'll ask:

- "What's in your knowledge base?"

- "How do you keep your technical documentation synced?"

- "Can I see the source attribution for that recommendation?"

Knowledge becomes exchangeable with clients. Imagine handing off a project and saying: "Here's the codebase. Here's the infrastructure. And here's the knowledge base we used to build it."

The client can query it. Extend it. Use it to onboard their internal team. The knowledge doesn't walk out the door when the consultants leave.

This is the shift from consulting as a service to consulting as infrastructure.

Exploring IOTA Trust Framework integration? Our knowledge base includes 2 years of architecture decisions, partner insights, and implementation patterns.

Request a Free Consultation

The Real Product Isn't the Model

I started with a problem: I was billing clients for time spent re-learning things I already knew.

I built a RAG system because human time doesn't scale. Because models hallucinate without grounded knowledge. Because every blockchain integration starts with the same research phase, and that phase should be instant.

What I didn't expect: this became the competitive advantage.

Not the model we use. Not the prompts we write. The 2 years of IOTA architecture decisions, partner feedback, regulatory analysis, and implementation patterns we've indexed.

That knowledge base is the product.

So here's the reframe: when you're evaluating blockchain consultants, don't ask which LLM they use. Ask what knowledge they've curated.

Ask to see their documentation structure. Ask how they keep regulatory guidance up to date. Ask if they can retrieve past architecture decisions in seconds, or if they're Googling during your discovery call.

The time tax is optional now. The question is whether your vendor has eliminated it.

Is your blockchain consultant billing you for research, or building knowledge systems that compound over time?

Need help implementing AI?

Schedule a free consultation to explore how KChain Solutions can help your organization implement production-grade blockchain architecture.

Valerio Mellini

Founder & IOTA Foundation Solution Architect

10+ years in software architecture across Accenture, PwC, Wolters Kluwer, and Ubiquicom. Certified Blockchain Solutions Architect. Helping enterprises implement production-grade blockchain systems with architecture-first methodology.